When You Say Nothing At All

Cornell University invention detects voice commands silently; Uniphore joins growing voice/AI unicorn club

Songwriters Paul Overstreet and Don Schlitz are Nashville royalty, having been awarded countless honors over lengthy careers. They’ve written more #1 hits combined than almost anyone alive.

Toward the end of a long and seemingly unproductive day of writing together, they came up with When You Say Nothing At All. They didn’t think much of it until country star Keith Whitley heard it and thought so highly of it, he had to record it. The song rose to the top of the country charts in 1988.

In 1995, six years after Keith Whitley passed away, Alison Krauss recorded it as a tribute to him. That version exploded in popularity, virally rising to the top of the charts despite zero effort from Alison Krauss and Union Station’s team to promote it.

Later on, in 1999, Irish singer Ronan Keating would record the song for the soundtrack of the feature film Notting Hill. The song reached #1 in several countries, including the UK, Ireland, and New Zealand.

Where the story gets even more interesting, from a voice/AI perspective: when Krauss’ version came out in 1995, Mike Cromwell, a longtime radio guy up in Milwaukee, decided to mash up the original Whitley version with the newer version from Alison Krauss and Union Station.

This version, haunting given Whitley’s death in 1989 (which was the reason for the Krauss version in the first place), instantly elicited a significant emotional reaction from listeners.

The duet took off on its own, getting played on country radio from coast to coast for almost an entire year afterward, despite never being released commercially.

Voice tech’s ascension has led people to wonder openly how certain types of queries, those more private in nature, would be handled in public.

For example, how would someone riding the subway ask Alexa to check their bank balance? You’re not going to feel comfortable saying that out loud, nor would you want the answer spoken out loud, if you’re among other people.

Hearables solve the second part: you can get the answer in your AirPods, or in some other way that others can’t hear.

But the first part has remained a mystery, until now.

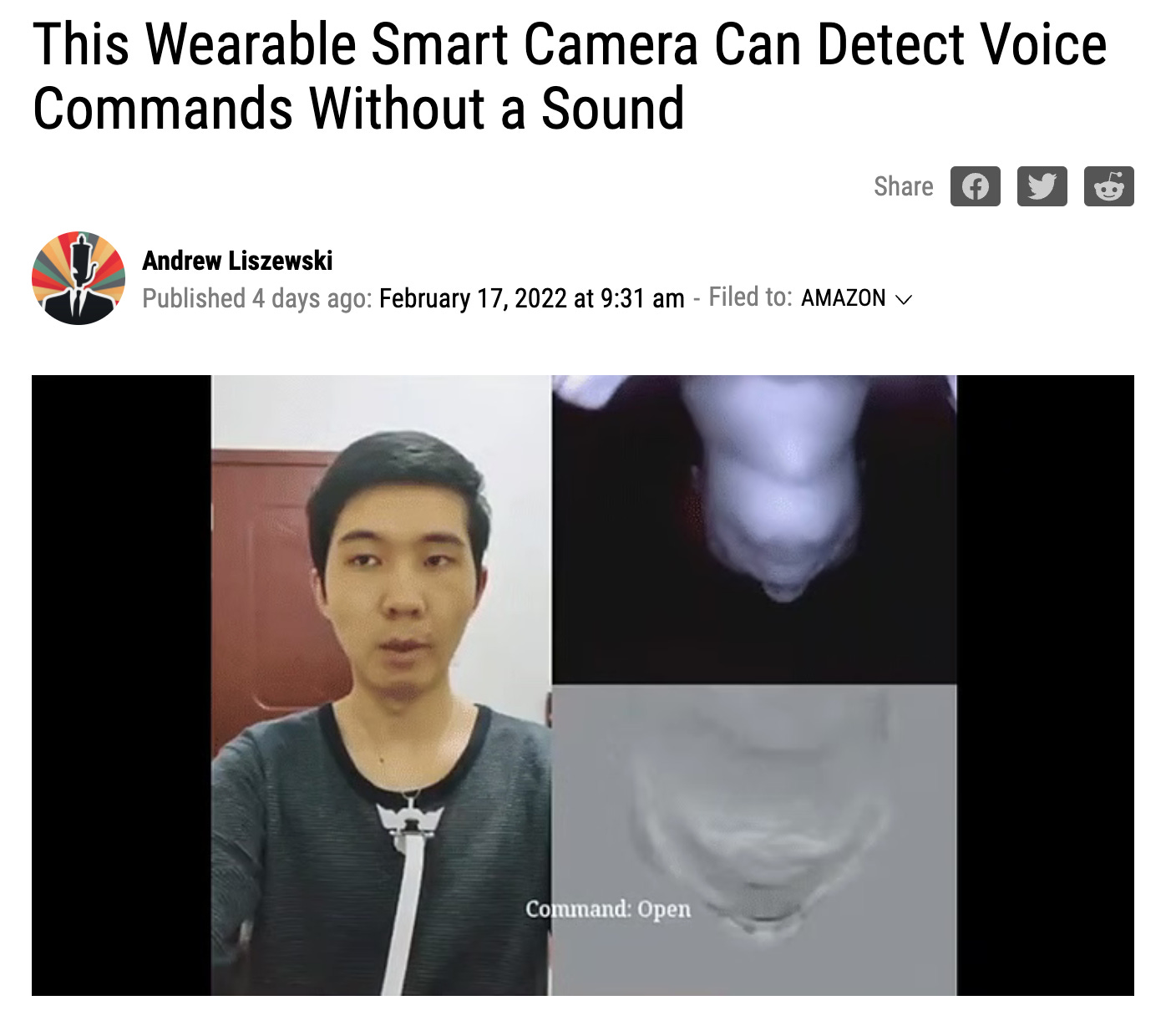

A team of researchers at Cornell University created SpeeChin, a small wearable camera on a necklace that looks up at your head and face and can determine what words you’re saying even with no vocal output.

So not only is something like this immediately usable to bring conversational AI to numerous new contexts where privacy was previously a constraint, but it also opens up voice technology to those who are mute or who have other vocal impediments.

In what is becoming such a regular occurrence that I’ve now created a video series for it (appropriately titled Anotha One), we welcome the team at Uniphore to the ranks of voice/AI unicorns.

In raising $400M at a valuation of $2.5B, Uniphore has taken what began in 2008 as a journey of just a couple of entrepreneurs and become one of the biggest voice/AI companies on the planet.

In the video linked above, I recount my first memory of encountering Uniphore back in 2019 at Opus Research’s Conversational Commerce Conference. It’s fascinating to see that they shared top billing at the conference with Nuance, and that both have gone on to soaring heights.

Just joined the program for the #1 event for voice/AI in America: Amazon, Speechmatics, Quantiphi, Veritone, DataForce, Symbl.ai

Previously announced: Washington Post, National Geographic, Verizon, Deepgram, HEAD acoustics, Bespoken, Atexto, Lenovo, Amazethu, Canary Speech, Audiobrain, Just AI, Speechly, Open Voice Network, Lundy, ReadSpeaker, Project Voice Capital Partners, Microsoft, SoundHound, and many others

You can book the preferred hotel here (which is attached to the convention center), and you can register for Project Voice 2022 here.

Attendance is capped at 750 executives.