The Ensemble Listening Model

Boston-based Modulate AI, best known for voice moderation within Call of Duty and GTA Online, unveils 'multi-dimensional' enterprise voice architecture

Modulate is a venture-backed voice AI startup in Boston that first made a name for itself in the massively multiplayer video game world, where its AI tools were used to moderate communication in the Call of Duty and Grand Theft Auto franchises.

Having conquered the entertainment world, the company expanded to support contact center operations in various fields, zeroing in on banking and insurance.

Earlier today, the company released an entirely new architecture called the Ensemble Listening Model (ELM). Rather than ‘flattening’ voice into a transcript, which loses a large degree of nuance, it orchestrates numerous models in a single effort to interpret real-world conversations more fully.

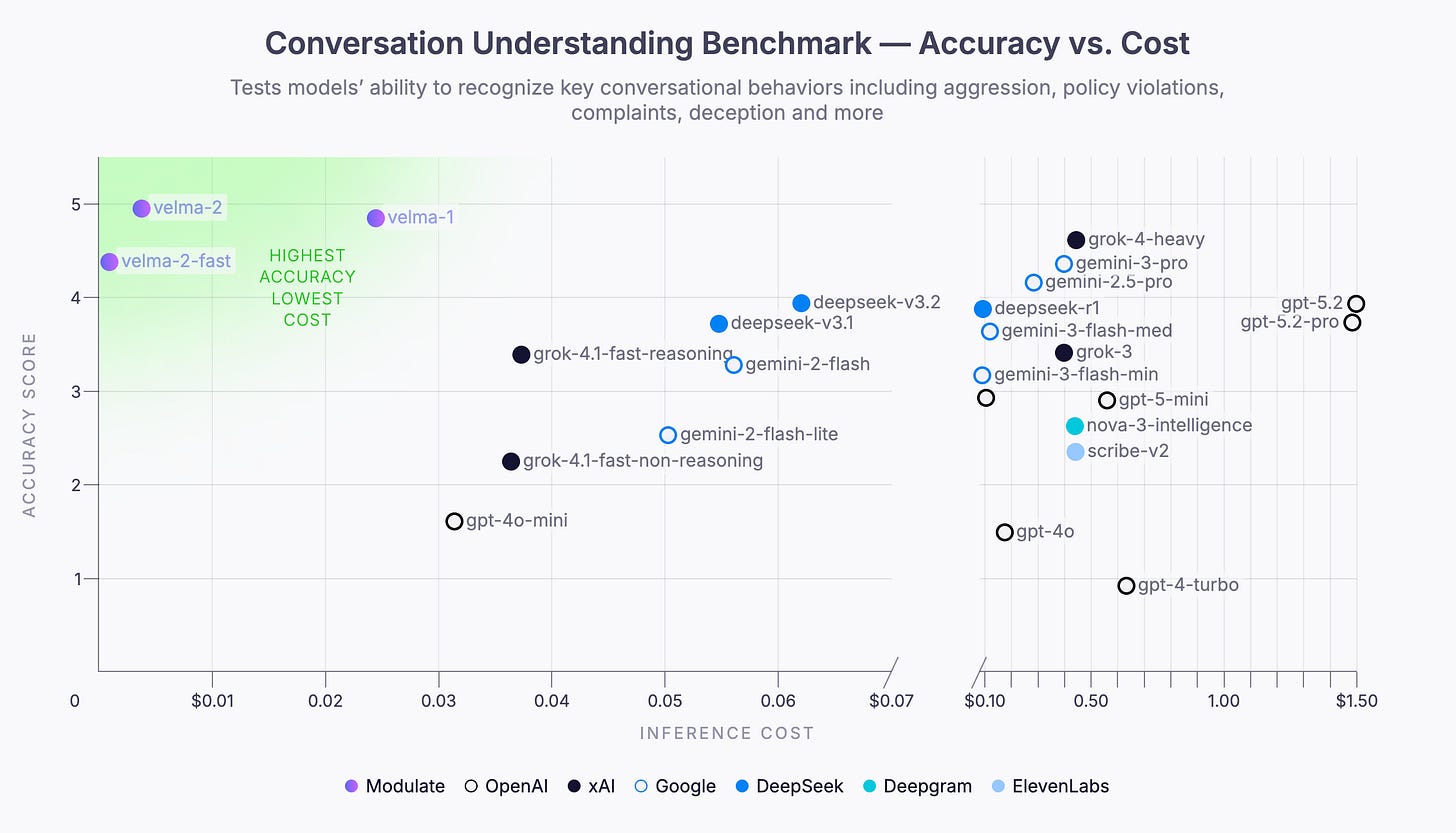

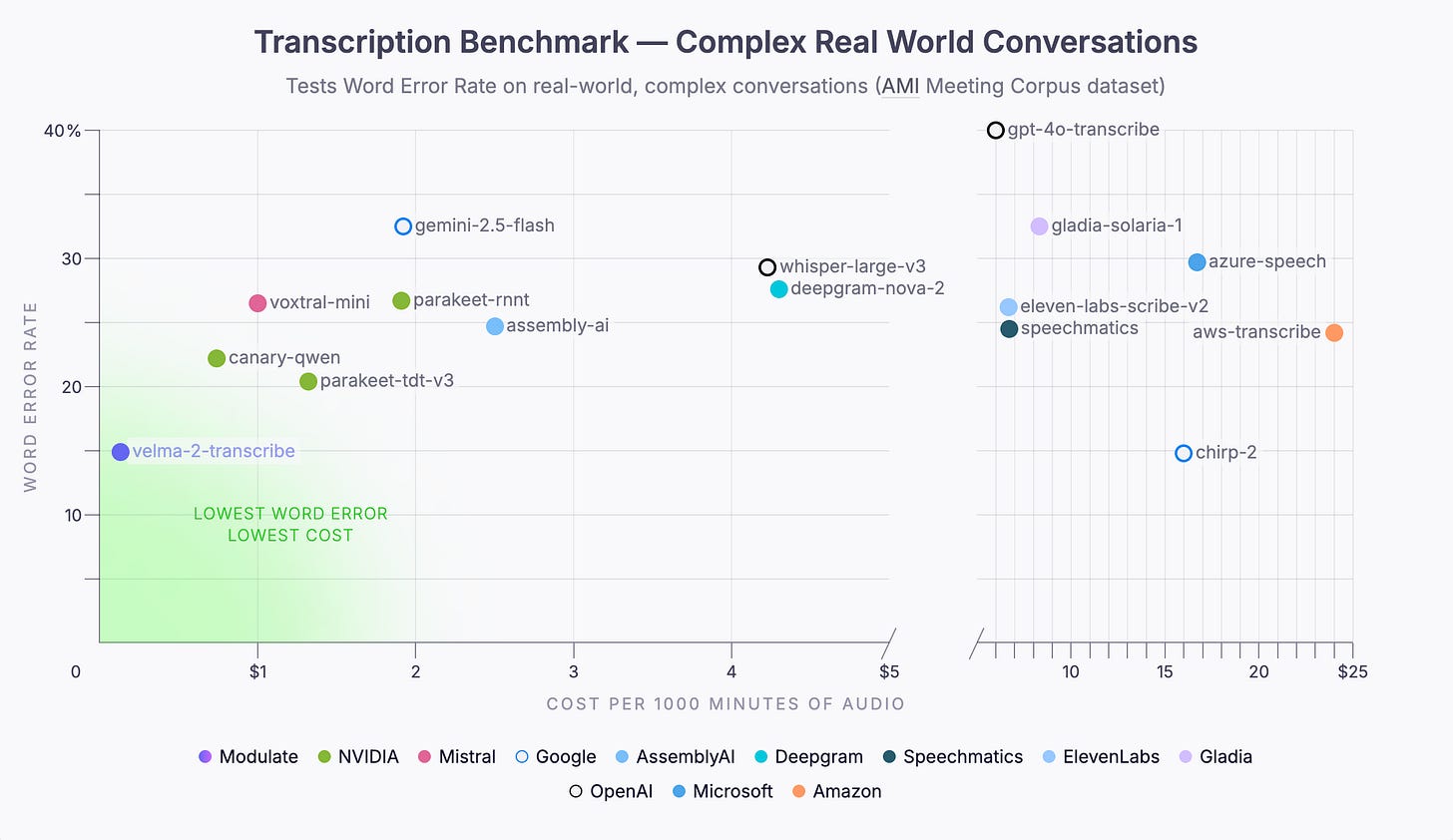

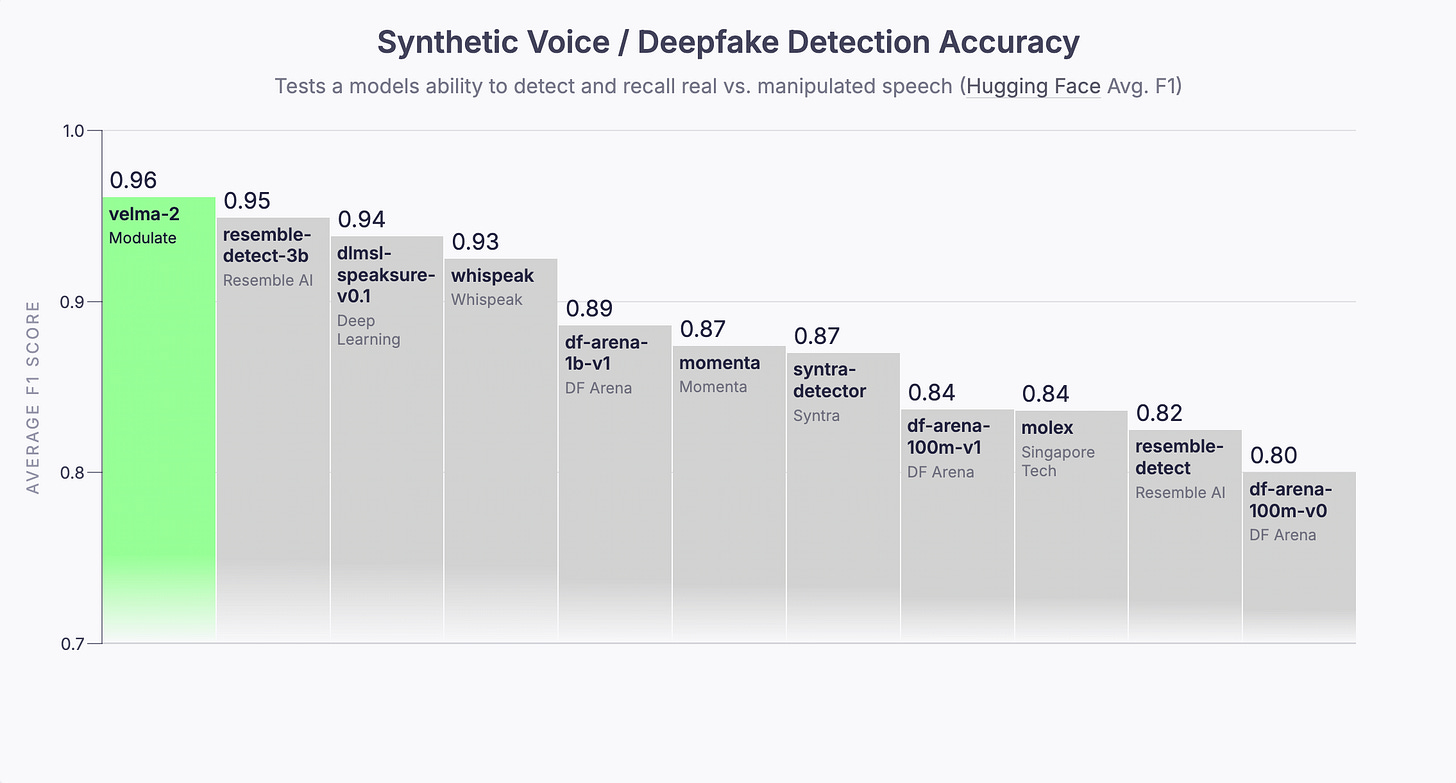

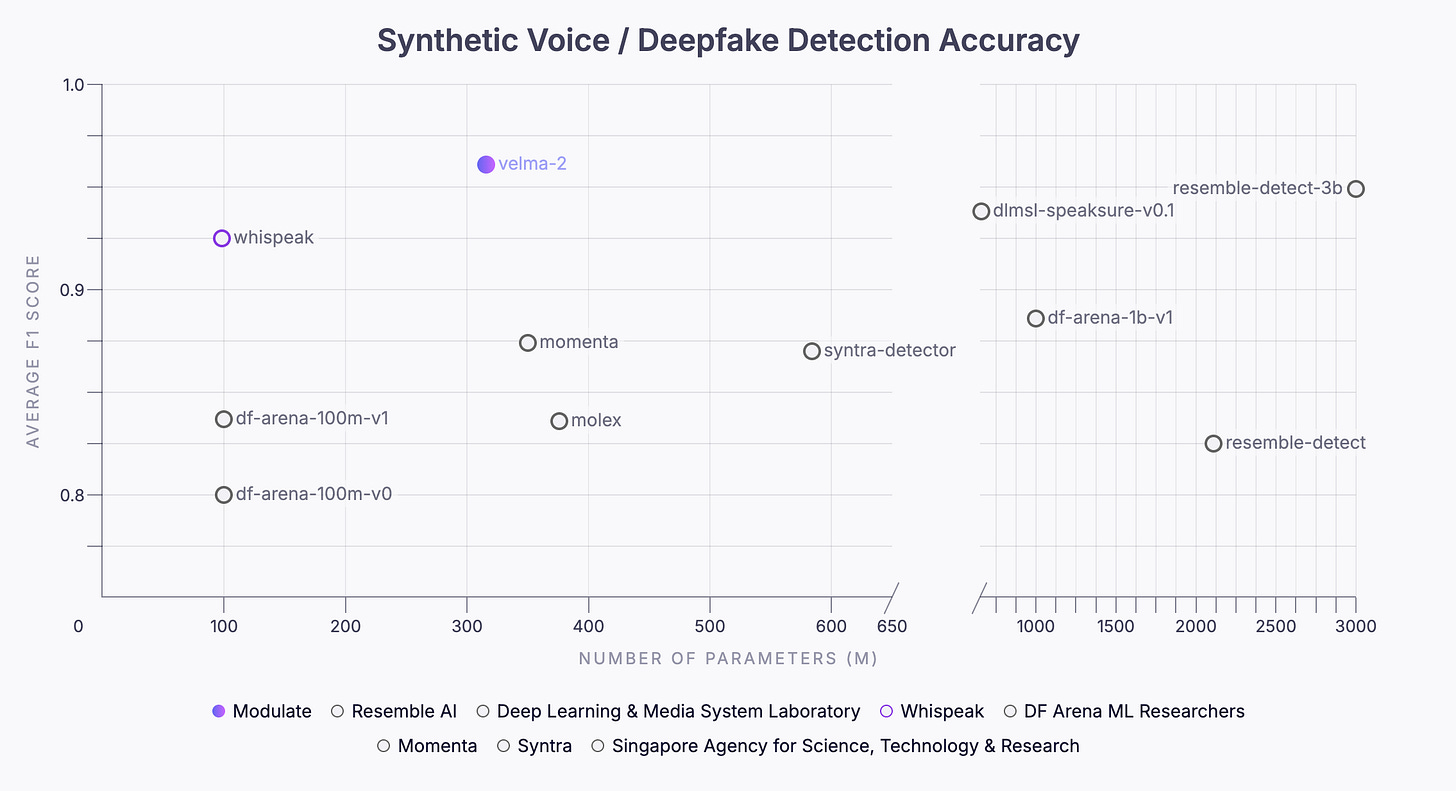

Modulate goes further, presenting comparisons in which its new ELM, called Velma 2.0, outperforms competitors from OpenAI to Gemini to ElevenLabs.

I am including the company’s press release here; it is worth reading.

But even more interesting are the accompanying comparative graphics, all four of which I am including here.

You can learn more about their research and findings at EnsembleListeningModel.com.